Understanding And Fixing Runtime Error: CUDA Error - Device-Side Assert Triggered

Have you ever encountered the frustrating error message "RuntimeError: CUDA error: device-side assert triggered" while working with deep learning models or GPU-accelerated computations? This cryptic error can bring your machine learning projects to a screeching halt, leaving you puzzled about what went wrong and how to fix it. In this comprehensive guide, we'll dive deep into what causes this error, why it occurs, and most importantly, how you can resolve it to get your computations running smoothly again.

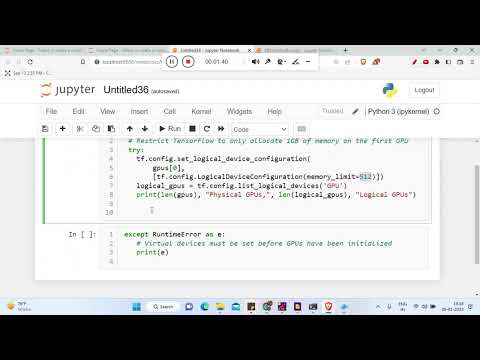

The device-side assert triggered error is particularly common among developers working with PyTorch, TensorFlow, and other deep learning frameworks that leverage CUDA for GPU acceleration. It's essentially a signal that something went wrong during the execution of your code on the GPU, and a specific assertion condition failed. But don't worry - with the right understanding and troubleshooting approach, you can overcome this hurdle and continue building your AI applications without interruption.

What is a Device-Side Assert Triggered Error?

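A device-side assert triggered error occurs when an assertion inside a CUDA kernel evaluates to false during GPU execution. In simpler terms, it's like a checkpoint in the code that says "this condition must be true for the program to continue." The kernel is usually one that ships with your framework (an indexing, embedding, or loss kernel, for example) rather than one you wrote yourself. When the condition fails on the GPU device, the kernel aborts and the framework surfaces the failure as the RuntimeError we see in Python. After that, the CUDA context is generally unusable, so you typically need to restart the Python process (or notebook kernel) before running GPU code again.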

CUDA (Compute Unified Device Architecture) is a parallel computing platform and API model created by NVIDIA. It allows developers to use NVIDIA GPUs for general purpose processing. When you write code that runs on the GPU, you're essentially creating parallel computations that execute simultaneously across thousands of GPU cores. The device-side assert is a safety mechanism that helps catch logical errors in your GPU code by verifying that certain conditions hold true during execution.

Common Causes of Device-Side Assert Errors

Understanding the root causes of this error is crucial for effective troubleshooting. Let's explore the most common scenarios that lead to device-side assert failures:

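Array Index Out of Bounds is perhaps the most frequent culprit. When a kernel is asked to access an element outside the valid range of a tensor, its bounds-checking assertion fails. For example, if you have a tensor of size 100 and your code attempts to index position 150 on the GPU, this will trigger the error. In PyTorch, the usual variants are a class label outside the range [0, num_classes - 1] passed to a loss function such as CrossEntropyLoss, or a token ID passed to an embedding layer that is greater than or equal to the size of its vocabulary.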
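A host-side check run before the loss or embedding call can turn the cryptic assert into a readable error. The following is a minimal sketch: `check_class_indices` is a hypothetical helper name, not part of PyTorch, and it assumes integer indices that must lie in [0, num_classes).

```python
import torch


def check_class_indices(indices: torch.Tensor, num_classes: int) -> None:
    """Raise ValueError if any index falls outside [0, num_classes).

    Hypothetical helper for illustration; not a PyTorch API.
    """
    if indices.numel() == 0:
        return
    lo = int(indices.min())
    hi = int(indices.max())
    if lo < 0 or hi >= num_classes:
        raise ValueError(
            f"indices span [{lo}, {hi}] but the valid range is "
            f"[0, {num_classes - 1}]"
        )


# Usage: validate labels before they reach a loss function on the GPU.
labels = torch.tensor([0, 3, 9])
check_class_indices(labels, num_classes=10)  # passes silently
```

If you rely on the `ignore_index` option of `CrossEntropyLoss` (default -100), mask those entries out before calling a check like this, since they are intentionally negative.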

Division by Zero is often blamed, but it is rarely the direct cause. Floating-point division by zero on the GPU does not assert; it silently produces Inf or NaN. The risk is indirect: those values, which often originate in normalization operations or custom loss functions, can later reach a kernel that does assert on its inputs.

Invalid Tensor Shapes are another suspect, although most shape mismatches (in matrix multiplication, for instance) are caught on the host and raise an ordinary, readable RuntimeError before any kernel runs. Shapes lead to a device-side assert mainly when they feed into indexing, for example when a model's output layer has fewer units than the number of classes in your labels.

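NaN or Inf Values in your computations can lead to assert failures. These special floating-point values propagate through calculations and trigger assertions when they reach a kernel that validates its inputs. Typical examples in PyTorch are sampling with torch.multinomial from probabilities that contain NaN, Inf, or negative entries, and passing values outside [0, 1] to a binary cross-entropy loss.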
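One way to catch such values before they reach the GPU kernel is a small validation function. This is a sketch only: `check_probabilities` is a hypothetical name, and it assumes the tensor is meant to hold probabilities in [0, 1].

```python
import torch


def check_probabilities(probs: torch.Tensor, name: str = "probs") -> None:
    """Raise ValueError if probs has NaN/Inf or values outside [0, 1].

    Hypothetical helper for illustration; not a PyTorch API.
    """
    if probs.numel() == 0:
        return
    if not torch.isfinite(probs).all():
        raise ValueError(f"{name} contains NaN or Inf")
    if probs.min() < 0 or probs.max() > 1:
        raise ValueError(f"{name} has values outside [0, 1]")


# Usage: validate model outputs before a binary cross-entropy loss
# or before sampling from them.
check_probabilities(torch.tensor([0.2, 0.8]), name="predictions")
```

Comparisons like these force a synchronization when the tensor lives on the GPU, so a check of this kind is better suited to debugging runs than to a hot training loop.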

How to Debug Device-Side Assert Errors

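Debugging CUDA errors requires a systematic approach, as the error messages often don't provide specific line numbers or detailed context. CUDA kernels are launched asynchronously, so by default the Python stack trace may point to a later, unrelated line rather than to the operation that actually failed. Here are effective strategies to identify and fix these issues: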

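Enable Better CUDA Error Reporting is the first step in your debugging journey. For PyTorch, set the environment variable CUDA_LAUNCH_BLOCKING=1 before the program starts. This forces each kernel launch to complete before the next Python statement runs, so the stack trace points at the operation that failed, at the cost of slower execution. In recent PyTorch versions the error message itself also suggests compiling with TORCH_USE_CUDA_DSA to enable more detailed device-side assertions.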
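One widely used setting for PyTorch is the CUDA_LAUNCH_BLOCKING environment variable, which makes kernel launches synchronous so that the Python traceback points at the failing operation. A minimal sketch, assuming you control the script's entry point:

```python
import os

# Must be set before the first CUDA call. Setting it at the very top of
# the entry-point script, before importing the framework, is the simplest
# way to guarantee that.
os.environ["CUDA_LAUNCH_BLOCKING"] = "1"

# Import torch and run your model code after this point.
```

The same effect can be had from the shell by prefixing the command, for example `CUDA_LAUNCH_BLOCKING=1 python train.py`, where `train.py` stands in for your own script. Remove the setting once the bug is found, since synchronous launches slow training down.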

Simplify Your Code by creating a minimal reproducible example. Remove complex parts of your code until you isolate the exact operation causing the assert. This process of elimination helps narrow down the problematic section without getting lost in a sea of code.
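A common way to shrink the problem is to run the suspect operation on the CPU, where the same mistake usually raises a plain Python exception with a descriptive message. The sketch below assumes a module whose inputs are tensors; `run_on_cpu` is a hypothetical helper name.

```python
import copy

import torch
from torch import nn


def run_on_cpu(module: nn.Module, *inputs: torch.Tensor):
    """Run a forward pass on CPU copies of the module and its inputs."""
    cpu_module = copy.deepcopy(module).to("cpu")
    cpu_inputs = [t.detach().to("cpu") for t in inputs]
    with torch.no_grad():
        return cpu_module(*cpu_inputs)


embedding = nn.Embedding(num_embeddings=10, embedding_dim=4)
bad_ids = torch.tensor([1, 12])  # 12 is outside the 10-row table

try:
    run_on_cpu(embedding, bad_ids)
except (IndexError, RuntimeError) as err:
    print(f"CPU run failed with {type(err).__name__}: {err}")
```

Do this in a fresh process: once a device-side assert has fired, copying tensors off the GPU in the same process is likely to fail as well.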

Check Tensor Dimensions and Shapes before operations. Implement assertions in your Python code to verify that tensor shapes match your expectations before passing them to CUDA operations. This helps catch shape mismatches early in the debugging process.
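As an illustration of such a check, the sketch below validates the operands of a 2-D matrix product before performing it. `check_matmul_shapes` is a hypothetical helper, and it deliberately ignores batched or broadcast multiplication.

```python
import torch


def check_matmul_shapes(a: torch.Tensor, b: torch.Tensor) -> None:
    """Raise ValueError unless 2-D tensors a and b can be multiplied as a @ b."""
    if a.dim() != 2 or b.dim() != 2:
        raise ValueError(
            f"expected 2-D tensors, got {a.dim()}-D and {b.dim()}-D"
        )
    if a.shape[1] != b.shape[0]:
        raise ValueError(
            f"cannot multiply {tuple(a.shape)} by {tuple(b.shape)}: "
            f"inner dimensions {a.shape[1]} and {b.shape[0]} differ"
        )


a = torch.randn(10, 5)
b = torch.randn(5, 3)
check_matmul_shapes(a, b)
c = a @ b  # result has shape (10, 3)
```

Shape metadata lives on the host, so this check costs no GPU synchronization and can stay in place outside debugging runs.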

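Use Gradient Checking if you're working with neural networks. Numerical instability during backpropagation can fill gradients, and then weights, with NaN or Inf values, which later trigger asserts in kernels that validate their inputs. Inspect the gradients after the backward pass for non-finite values; in PyTorch, torch.autograd.set_detect_anomaly(True) can also report which backward operation first produced a NaN.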
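A simple form of this check is to scan every parameter's gradient after the backward pass. The sketch below uses a hypothetical helper, `find_nonfinite_grads`, and a deliberately poisoned input to show what it reports.

```python
from typing import List

import torch
from torch import nn


def find_nonfinite_grads(model: nn.Module) -> List[str]:
    """Return the names of parameters whose gradient has NaN or Inf."""
    bad = []
    for name, param in model.named_parameters():
        if param.grad is not None and not torch.isfinite(param.grad).all():
            bad.append(name)
    return bad


model = nn.Linear(2, 1)
x = torch.tensor([[1.0, float("nan")]])  # NaN injected on purpose
model(x).sum().backward()

# The weight gradient equals the input, so it inherits the NaN;
# the bias gradient stays finite.
print(find_nonfinite_grads(model))
```

Calling a function like this right after `backward()` and before the optimizer step keeps bad gradients from being written into the weights.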

Step-by-Step Troubleshooting Guide

When you encounter a device-side assert error, follow this systematic troubleshooting process:

Step 1: Reproduce the Error Consistently. Try to identify the exact input or model configuration that triggers the error. This consistency is crucial for effective debugging.

Step 2: Add Validation Checks. Insert Python assertions before your CUDA operations to validate tensor shapes, values, and ranges. For example, check that tensor sizes match expected dimensions and that values are within valid ranges.

Step 3: Isolate the Problem. Comment out or disable parts of your code to isolate which specific operation is causing the assert. Start with the most recent changes or the most complex operations.

Step 4: Check Data Preprocessing. Often, device-side asserts are triggered by bad input data. Verify that your data preprocessing steps aren't creating invalid values or shapes that could cause GPU computations to fail.

Step 5: Review CUDA Kernel Code. If you're writing custom CUDA kernels, carefully review your indexing logic, boundary conditions, and arithmetic operations. Pay special attention to loops and array accesses.
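For Step 3, commenting code in and out by hand can be replaced by forward hooks that record each layer as it finishes. The sketch below is one possible approach under stated assumptions: `trace_leaf_modules` is a hypothetical helper, and it synchronizes after every leaf module so that a pending assert surfaces at the layer that caused it.

```python
from typing import List, Tuple

import torch
from torch import nn


def trace_leaf_modules(model: nn.Module) -> Tuple[List[str], list]:
    """Attach hooks that log each leaf module once its forward pass is done.

    Returns the (initially empty) log and the hook handles for cleanup.
    """
    completed: List[str] = []
    handles = []

    def make_hook(name: str):
        def hook(module, inputs, output):
            if torch.cuda.is_available():
                # Wait for queued kernels so a pending assert is raised here.
                torch.cuda.synchronize()
            completed.append(name)
        return hook

    for name, module in model.named_modules():
        if len(list(module.children())) == 0:  # leaf modules only
            handles.append(module.register_forward_hook(make_hook(name)))
    return completed, handles


model = nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))
completed, handles = trace_leaf_modules(model)
model(torch.randn(2, 4))
print(completed)  # names of the layers that finished, in execution order

for handle in handles:
    handle.remove()  # detach the hooks once debugging is over
```

If the forward pass raises, the log holds the layers that completed cleanly, and the failing layer is the next one in execution order.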

Preventing Device-Side Assert Errors

Prevention is always better than cure. Here are strategies to minimize the occurrence of device-side assert errors in your projects:

Implement Robust Input Validation in your code. Before passing data to GPU operations, validate that inputs meet all requirements. This includes checking for NaN values, verifying tensor shapes, and ensuring numerical ranges are appropriate.

Use Safe CUDA Practices when writing custom kernels. Always check array bounds, handle edge cases, and implement proper error checking. Consider using CUDA's built-in error checking mechanisms and debugging tools.

Monitor Training Progress with logging and visualization. Sometimes device-side asserts occur due to data issues that manifest only after many training iterations. Regular monitoring can help catch these issues early.

Keep Dependencies Updated as newer versions of deep learning frameworks often include better error handling and debugging capabilities. Also, ensure your CUDA toolkit and GPU drivers are up to date.

Advanced Debugging Techniques

For more complex scenarios, consider these advanced debugging approaches:

Compute Sanitizer (the compute-sanitizer command, which replaces the older CUDA-MEMCHECK tool in recent CUDA toolkits) is a powerful tool that can detect various memory errors, including out-of-bounds and misaligned memory accesses. It's particularly useful when you suspect memory-related issues are causing your device-side asserts.

NVIDIA Nsight provides comprehensive debugging and profiling tools for CUDA applications. It allows you to set breakpoints, inspect variables, and step through GPU code, making it invaluable for complex debugging scenarios.

Mixed Precision Training can sometimes trigger device-side asserts due to numerical instability. If you're using mixed precision, try switching to full precision to see if the error persists, which can help isolate precision-related issues.
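Switching between the two modes is easiest when mixed precision is controlled by a single flag. The following is a minimal sketch assuming a reasonably recent PyTorch that provides `torch.autocast`; `forward_with_optional_amp` is a hypothetical helper name.

```python
import torch
from torch import nn


def forward_with_optional_amp(model: nn.Module, x: torch.Tensor, use_amp: bool):
    """Run a forward pass with autocast switched on or off by a flag."""
    with torch.autocast(device_type=x.device.type, enabled=use_amp):
        return model(x)


device = "cuda" if torch.cuda.is_available() else "cpu"
model = nn.Linear(8, 4).to(device)
x = torch.randn(2, 8, device=device)

# Full precision: autocast is disabled, so the output stays float32.
full = forward_with_optional_amp(model, x, use_amp=False)
print(full.dtype)
```

If the error disappears with `use_amp=False`, the problem is likely an overflow or underflow in reduced precision rather than a bad index.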

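Distributed Training Considerations apply when working with multi-GPU setups. A device-side assert on one GPU, often caused by invalid data in that rank's batch, can leave the other ranks hanging or reporting secondary communication errors, which obscures the real source. Ensure your distributed training setup is correctly configured, check which rank failed first, and try to reproduce the failure on a single GPU with that rank's data.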

Real-World Examples and Solutions

Let's look at some practical examples of device-side assert errors and their solutions:

Example 1: Matrix Multiplication Shape Mismatch

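The following code multiplies two matrices whose inner dimensions do not match:

    import torch

    A = torch.randn(10, 5).cuda()
    B = torch.randn(10, 5).cuda()
    # Fails: A has 5 columns but B has 10 rows
    C = torch.matmul(A, B)

In current PyTorch versions this is typically reported as an ordinary RuntimeError about incompatible shapes rather than as a device-side assert, but the fix is the same.

Solution: Verify matrix dimensions before multiplication. The number of columns in the first matrix must equal the number of rows in the second matrix. Here, transposing B (or creating it with shape (5, 10)) makes the product valid.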

Example 2: Batch Normalization with Zero Variance

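The following code normalizes each feature of a batch in which one feature is constant:

    import torch

    x = torch.randn(32, 10).cuda()
    x[:, 0] = 0  # First feature is constant, so its variance is zero
    var, mean = torch.var_mean(x, dim=0)  # Note the order: (var, mean)
    x_norm = (x - mean) / torch.sqrt(var + 1e-5)  # Epsilon keeps the denominator nonzero

Two things can go wrong here. torch.var_mean returns the variance first and the mean second, so unpacking the result as mean, var silently swaps them. And without the epsilon term, the constant feature would be divided by zero, producing NaN values rather than an immediate assert; those NaNs can trigger an assert later if they reach a kernel that validates its inputs.

Solution: Add epsilon to the variance before taking the square root, and check for zero-variance features before normalization.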
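The effect of the epsilon term is easy to see on the CPU, where the division simply yields NaN instead of raising anything. This is a small sketch for illustration; the variable names `without_eps` and `with_eps` are not from the original example.

```python
import torch

x = torch.randn(32, 10)
x[:, 0] = 0.0  # constant feature: mean 0, variance exactly 0

# torch.var_mean returns (variance, mean), in that order.
var, mean = torch.var_mean(x, dim=0)

without_eps = (x - mean) / torch.sqrt(var)       # column 0 becomes 0/0
with_eps = (x - mean) / torch.sqrt(var + 1e-5)   # column 0 becomes 0

print(torch.isnan(without_eps[:, 0]).all())  # every entry in column 0 is NaN
print(torch.isfinite(with_eps).all())        # no NaN or Inf anywhere
```

The NaN column is the kind of silent damage that tends to surface many steps later, which is why it helps to check normalization outputs directly rather than wait for an assert.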

Best Practices for CUDA Development

To minimize device-side assert errors and improve your overall CUDA development experience:

Write Defensive Code that anticipates potential issues. Include checks for edge cases, validate inputs, and handle exceptions gracefully. This proactive approach can prevent many common errors.

Use Version Control effectively. When debugging complex issues, being able to revert to known good states and track changes systematically is invaluable. Git or similar systems are essential tools.

Document Your Code thoroughly, especially when working with complex CUDA operations. Clear documentation helps you and others understand the assumptions and requirements of your code, making debugging easier.

Test with Diverse Data to ensure your code handles various edge cases. Include tests with extreme values, boundary conditions, and unexpected inputs to verify robustness.

When to Seek Help

Sometimes, despite your best efforts, device-side assert errors can be particularly stubborn. Here are signs that you might need external assistance:

Persistent Errors that you can't reproduce consistently or that appear only in specific environments might indicate deeper issues with your hardware, drivers, or framework installation.

Complex Custom Kernels with sophisticated logic can be challenging to debug. If you're writing custom CUDA kernels and encountering device-side asserts, consider seeking help from CUDA experts or the developer community.

Performance-Critical Applications where debugging time is expensive might warrant professional assistance or consultation with experienced CUDA developers.

Conclusion

The RuntimeError: CUDA error: device-side assert triggered error, while frustrating, is a valuable debugging tool that helps catch logical errors in your GPU-accelerated code. By understanding its causes - from array bounds violations to numerical instabilities - and implementing systematic debugging approaches, you can effectively resolve these issues and build more robust deep learning applications.

Remember that device-side asserts are essentially your GPU's way of saying "something doesn't look right here." Rather than viewing them as obstacles, treat them as helpful indicators that guide you toward better, more reliable code. With the strategies outlined in this guide, you're now equipped to tackle these errors head-on and continue your journey in GPU-accelerated computing with confidence.

The key to success is patience, systematic debugging, and a willingness to learn from each error encountered. As you gain more experience with CUDA development, you'll find that these errors become less frequent and easier to resolve, allowing you to focus on building innovative AI applications that leverage the full power of GPU computing.