8 Bit Vs 16 Bit: Decoding Digital Color Depth

Ever wondered why your old Nintendo games look so blocky and boldly colored, while modern photos and videos seem to blend colors seamlessly? Part of the answer lies in a fundamental concept of digital imaging: bit depth. (Strictly speaking, the "8-bit" in "8-bit console" describes the processor, and the blocky look comes from low resolution, but those systems were also limited to small color palettes.) The 8 bit vs 16 bit debate isn't just for tech geeks; it's a crucial choice that impacts everything from the video games you play to the professional films you watch and the designs you create. But what do these numbers really mean, and when does one triumph over the other?

This isn't about choosing a "better" technology in a vacuum. It's about the right tool for the right job. 8-bit and 16-bit represent two different philosophies in how digital systems store and display color and tonal information. One prioritizes efficiency and broad compatibility, while the other pursues maximum fidelity and creative flexibility. Understanding this 8 bit vs 16 bit comparison is key for anyone working with digital visuals, from a casual smartphone photographer to a Hollywood colorist. Let’s break it down.

The Foundation: What Exactly is "Bit Depth"?

Before diving into the battle, we need to understand the battlefield. Bit depth refers to the number of bits (binary digits) used to store color information in a digital image or video frame. Each bit can be either a 0 or a 1, so every added bit doubles the number of possible values: n bits give 2^n distinct levels. Think of those levels as the "slots" or "buckets" available to describe a color's intensity. This directly determines the number of distinct colors or shades a system can produce.

In color models like RGB (Red, Green, Blue), which is standard for most digital displays, bit depth is typically applied per channel. So, an "8-bit" image usually means 8 bits each for red, green, and blue, or 24 bits per pixel in total. The total color palette is the product of the possibilities for each channel. This foundational knowledge is critical for any 8 bit vs 16 bit discussion.

The Math of Color: 8-Bit's 16.7 Million vs. 16-Bit's 281 Trillion

Let's do the quick math. For an 8-bit per channel system:

- Red: 2^8 = 256 shades

- Green: 2^8 = 256 shades

- Blue: 2^8 = 256 shades

- Total Colors = 256 x 256 x 256 = 16,777,216 (approximately 16.7 million).

Now for 16-bit per channel:

- Red: 2^16 = 65,536 shades

- Green: 2^16 = 65,536 shades

- Blue: 2^16 = 65,536 shades

- Total Colors = 65,536 x 65,536 x 65,536 = 281,474,976,710,656 (approximately 281 trillion).
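If you'd like to check this arithmetic yourself, here is a minimal Python sketch (the function names are just illustrative) that computes shades per channel and total RGB colors for a few common bit depths, assuming all three channels use the same depth:

```python
def levels_per_channel(bits):
    """Distinct values a single channel can hold: each extra bit doubles the count."""
    return 2 ** bits


def total_rgb_colors(bits_per_channel):
    """Total colors when red, green and blue each use the same bit depth."""
    return levels_per_channel(bits_per_channel) ** 3


for bits in (8, 10, 12, 16):
    print(f"{bits:>2}-bit: {levels_per_channel(bits):>6,} shades per channel, "
          f"{total_rgb_colors(bits):,} total colors")

# Compare 16-bit with 8-bit.
print(levels_per_channel(16) // levels_per_channel(8))  # 256 (per channel)
print(total_rgb_colors(16) // total_rgb_colors(8))      # 16777216 (total colors)
```

Notice how much smaller the per-channel gap (256 times) is than the total-color gap: the total multiplies three channels together, so small per-channel differences compound quickly.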

The difference isn't just incremental; it's astronomical. 16-bit offers 256 times more shades per channel than 8-bit, which works out to roughly 16.7 million times more possible colors in total (2^48 vs. 2^24). But does your eye or your monitor even see that difference? That's where practical application comes in.

8-Bit: The Ubiquitous Workhorse of the Digital World

8-bit color depth is the default, the standard, the everywhere. It's the language of the internet, social media, the JPEGs from most consumer digital cameras, smartphone screens, and most standard dynamic range (SDR) video. Its dominance is due to a perfect storm of historical precedent, file size efficiency, and sheer practicality.

Why 8-Bit Became the Global Standard

The 8-bit-per-channel standard (often called "True Color," or 24-bit color) was cemented in the early days of personal computing and graphics. It provided a massive leap from the limited 256-color palettes of earlier systems (like the original VGA standard, which could show 256 colors at once chosen from a larger palette) to what was then perceived as "photorealistic" color for digital displays. Confusingly, those older 256-color modes were also called "8-bit color," because they used 8 bits per pixel rather than per channel. File size and bandwidth are its greatest allies. An 8-bit image file is significantly smaller than its 16-bit counterpart, making it ideal for fast-loading websites, email attachments, and streaming video where data caps and speed are concerns.

For the vast majority of everyday use cases—viewing photos on Instagram, watching a YouTube video, designing a simple web banner—8-bit color is more than sufficient. The human eye has limitations, and most consumer monitors (even many 4K monitors) are only 8-bit per channel native, sometimes using dithering to simulate more colors. You're rarely losing visible information when working in 8-bit for these purposes.

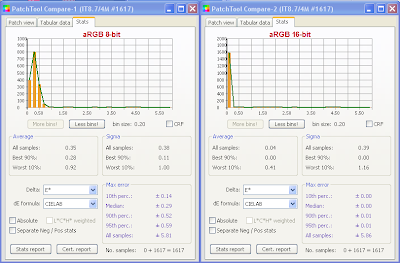

The Critical Limitation: Banding

So, what's the big problem with 8-bit? Banding. Also known as posterization, banding occurs when there aren't enough color shades to represent a smooth gradient. Instead of a seamless transition from sky blue to white in a cloud, you see distinct, hard "bands" of color. This is most noticeable in large areas of subtle shade changes, like sunsets, shaded walls, or smooth gradients in graphic design. 8-bit's 256 shades per channel can sometimes fall short, especially when making significant adjustments in photo editing software like Adobe Lightroom or Photoshop. This is the primary technical argument in the 8 bit vs 16 bit debate for professionals.
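To see banding numerically, the sketch below rounds a hypothetical subtle sky gradient (brightness 0.60 to 0.70 across 3,000 pixels, values chosen purely for illustration) to 8-bit and 16-bit codes, then counts how many distinct levels survive and how wide each flat band is on average. It's a simplified model that ignores gamma encoding and the display itself, and the function names are illustrative.

```python
def quantize(value, bits):
    """Round an intensity in 0.0-1.0 to the nearest integer code at the given bit depth."""
    max_code = 2 ** bits - 1
    return round(value * max_code)


def ramp(start, end, width):
    """A perfectly smooth horizontal gradient of `width` samples from start to end."""
    return [start + (end - start) * i / (width - 1) for i in range(width)]


def band_stats(values, bits):
    """Return (distinct levels, average band width in pixels) after quantization."""
    codes = [quantize(v, bits) for v in values]
    distinct = len(set(codes))
    return distinct, len(values) / distinct


sky = ramp(0.60, 0.70, 3000)  # hypothetical subtle sky gradient, 3,000 px wide
for bits in (8, 16):
    distinct, avg_width = band_stats(sky, bits)
    print(f"{bits}-bit: {distinct} distinct levels, about {avg_width:.0f} px per band")
```

At 8 bits the ramp collapses to a couple of dozen levels, each a flat stripe more than a hundred pixels wide; at 16 bits every pixel gets its own value.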

16-Bit: The Professional's Sanctuary for Fidelity

Enter 16-bit color depth, part of what is often called "Deep Color" (not to be confused with "High Color," an older mode that used 16 bits per pixel in total and could show only 65,536 colors). This is the domain of professional photographers, high-end videographers, visual effects (VFX) artists, and color graders. Its purpose is singular: to preserve the maximum amount of tonal and color information from capture to final output, providing an immense safety net for intensive editing.

The Editing Safety Net: Why Pros Swear By It

Imagine you take a stunning landscape photo in RAW format. Your camera's sensor captures a wide dynamic range: the difference between the darkest shadows and brightest highlights. When you import this RAW file into an editor, you're often working with a 16-bit per channel image (even if your final delivery is 8-bit). This 16-bit workspace acts as a massive buffer. You can dramatically pull up shadows, pull back highlights, adjust saturation, and apply multiple filters without the banding that would plague an 8-bit file under the same stress. (Bit depth won't hide sensor noise, though: brightening deep shadows amplifies noise whatever the working format.)

Every major adjustment that stretches or compresses tones can merge neighboring shades or leave gaps between them once the result is rounded back to the file's bit depth, permanently discarding color information. Starting with 16-bit gives you a colossal 65,536 shades per channel to work with, so you can stack many heavy edits and still have more than enough precision left to produce a smooth 8-bit final image for the web or print. Starting with only 256 shades (8-bit) means your edits will quickly degrade image quality. For professionals, 16-bit is non-negotiable insurance against quality loss.
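Here is a rough simulation of that effect under deliberately simple assumptions: linear values, no tone curve, and a single +2 stop shadow lift. A dark ramp from 0.0 to 0.1 is stored at either 8 or 16 bits, brightened four times, and exported to 8-bit. The hypothetical `lift_shadows` function reports how many distinct output shades remain and the largest jump between neighbouring shades.

```python
def lift_shadows(working_bits, gain=4.0, samples=2000):
    """Store a dark ramp at `working_bits`, brighten it by `gain`, export to 8-bit.

    Returns (distinct output shades, largest gap between neighbouring output shades).
    """
    max_code = 2 ** working_bits - 1
    # 1. A smooth shadow ramp from 0.0 to 0.1, rounded to the working bit depth.
    stored = [round(0.1 * i / (samples - 1) * max_code) for i in range(samples)]
    # 2. Brighten (a +2 stop lift when gain is 4), round to 8-bit output, clamp at white.
    exported = sorted({min(255, round(code / max_code * gain * 255)) for code in stored})
    largest_gap = max(b - a for a, b in zip(exported, exported[1:]))
    return len(exported), largest_gap


for bits in (8, 16):
    shades, gap = lift_shadows(bits)
    print(f"edited in {bits}-bit: {shades} output shades, largest jump {gap} levels")
```

In the 8-bit case the output jumps four levels at a time, which shows up as visible steps in the lifted shadows; the 16-bit version fills every output level in between.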

Beyond Photography: Video, CGI, and HDR

The 8 bit vs 16 bit conversation extends powerfully into motion. In video, 8-bit is standard for most consumer formats (like H.264/MP4). However, professional video codecs like ProRes, DNxHD, and RAW video formats often support 10-bit, 12-bit, or even higher bit depths. 10-bit video (1024 shades per channel) is becoming the new professional baseline, offering a massive improvement over 8-bit for color grading and HDR (High Dynamic Range) content.

In computer-generated imagery (CGI) and visual effects, 16-bit half-float and 32-bit floating-point pipelines (for example, using the OpenEXR format) are the norm. Unlike integer formats, floating-point values aren't capped at "white," so they can hold the insane dynamic range of light simulations (like a bright light source next to a dark shadow) without clipping. For HDR displays (which can show brighter highlights and deeper blacks), a higher bit depth is essential to encode the expanded range of luminance values smoothly, preventing banding in the bright or dark areas of the image.
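The difference between integer and floating-point storage is easy to demonstrate. The sketch below stores three hypothetical linear-light values, including a lamp eight times brighter than "white" (1.0), once as 16-bit integers and once as 16-bit half floats (using Python's `struct` module and its `'e'` half-precision format code), then darkens everything by three stops as a compositor might. The helper names are illustrative.

```python
import struct


def through_uint16(value):
    """Round-trip through 16-bit integer storage: 0.0-1.0 maps to 0-65535, brighter values clip."""
    code = min(65535, max(0, round(value * 65535)))
    return code / 65535


def through_half(value):
    """Round-trip through IEEE 754 half-precision (16-bit float) storage."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


# Hypothetical linear scene values: deep shadow, mid-grey, and a lamp at 8x "white".
scene = [0.02, 0.18, 8.0]
as_int = [through_uint16(v) for v in scene]
as_half = [through_half(v) for v in scene]

# Darken by three stops (divide by 8), as a compositor might when grading the lamp down.
print("16-bit integer:", [round(v / 8, 4) for v in as_int])
print("16-bit float:  ", [round(v / 8, 4) for v in as_half])
```

With integer storage the lamp clipped to 1.0 and comes out as a dull 0.125 grey; the half-float version keeps its true brightness, so the grade behaves as if the light were really there.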

The Practical Showdown: When to Choose Which?

Now for the million-dollar question: Should you use 8-bit or 16-bit? The answer is almost always "it depends." Let's map it out.

Use 8-Bit When:

- Delivering for the Web & Social Media: Browsers, apps, and social platforms are built around 8-bit JPEGs and PNGs. Uploading a 16-bit TIFF brings no visible benefit there, only a larger file.

- Working with Standard Video: Your final output is an 8-bit MP4 for YouTube or Instagram. You can edit in 16-bit and export to 8-bit, but if your source is 8-bit (like many smartphone videos), extra precision in the edit can't recover tonal detail the footage never captured.

- File Size is a Paramount Constraint: Archiving millions of photos, working on a machine with limited storage, or sending files to a client with slow internet.

- Your Monitor is 8-bit Native: If your display can't physically show more than 8-bit per channel, you won't see the extra data. (Many "10-bit" monitors use dithering to simulate it from an 8-bit panel).

- Quick, Simple Edits: Minor adjustments to a well-exposed JPEG. If you're just cropping and adding a filter, 8-bit is fine.

Use 16-Bit (or Higher) When:

- Professional Photo Editing: Any serious work in Lightroom, Capture One, or Photoshop where you'll be making significant exposure, color, or tonal curve adjustments.

- Working with RAW Files: Your camera's RAW file is inherently a high-bit-depth data container (often 12-bit or 14-bit native, mapped to 16-bit in software). You should preserve this depth throughout your workflow.

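- Creating Graphics with Gradients: Designing logos, backgrounds, or UI elements with smooth color fades. Working in 16-bit greatly reduces banding risk while you edit, though a gradient can still band when it is finally exported to 8-bit unless a little noise or dithering is added.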

- High-End Video Production: Shooting log or RAW video formats, and performing color grading in DaVinci Resolve, Premiere Pro, or Final Cut Pro.

- Preparing for Large Format Print: billboards, fine art prints, or any output where the image will be viewed up close or on a high-quality printer with a wide gamut.

- Compositing and VFX: Combining multiple images, creating matte paintings, or any task where you need to manipulate pixel values extensively without degradation.
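On the gradient point above: the usual way to keep a high-bit-depth gradient smooth when exporting to 8-bit is to add a tiny amount of noise (dither) before rounding. The sketch below uses triangular (TPDF) dither, one common choice among several, on a hypothetical 3,000-pixel ramp and measures the widest flat band with and without it. The function names are illustrative, not any particular editor's export code.

```python
import random


def to_8bit(value, rng=None):
    """Quantize a 0.0-1.0 value to an 8-bit code, optionally adding triangular (TPDF) dither."""
    code = value * 255
    if rng is not None:
        # Two uniform draws in [-0.5, 0.5) summed: triangular noise spanning about +/-1 code.
        code += (rng.random() - 0.5) + (rng.random() - 0.5)
    return min(255, max(0, round(code)))


def longest_run(codes):
    """Width in pixels of the widest flat band (longest run of identical neighbouring codes)."""
    best = run = 1
    for prev, cur in zip(codes, codes[1:]):
        run = run + 1 if cur == prev else 1
        best = max(best, run)
    return best


width = 3000
ramp = [0.60 + 0.10 * i / (width - 1) for i in range(width)]  # hypothetical high-bit-depth gradient
rng = random.Random(42)  # fixed seed so the result is repeatable

plain = [to_8bit(v) for v in ramp]
dithered = [to_8bit(v, rng) for v in ramp]
print("widest band without dither:", longest_run(plain), "px")
print("widest band with dither:   ", longest_run(dithered), "px")
```

Dither doesn't add real tonal information; it trades wide, regular bands for fine grain that the eye averages into a smooth transition.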

The Middle Ground: 10-Bit and 12-Bit

The 8 bit vs 16 bit dichotomy oversimplifies a spectrum. 10-bit (1.07 billion colors) and 12-bit (68.7 billion colors) are incredibly important practical standards, especially in video. A 10-bit monitor/GPU pipeline is a fantastic sweet spot for many prosumer creators, offering a huge leap over 8-bit for gradient smoothness and color grading headroom without the file size bloat of full 16-bit per channel. Many modern mirrorless cameras and smartphones now offer 10-bit output over HDMI or in HEIF/HEIC photo formats.
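Part of 10-bit's efficiency comes from packing: three 10-bit channels need only 30 bits, so an RGB pixel can fit in one 32-bit word (4 bytes) instead of the 6 bytes that 16-bit RGB requires. The sketch below packs and unpacks such a word. The bit layout is a hypothetical example; real formats (for instance 10:10:10:2 layouts that keep a 2-bit alpha in the spare bits, or video-specific YUV packings) differ in detail.

```python
def pack_rgb10(r, g, b):
    """Pack three 10-bit channel values (0-1023) into one 32-bit integer.

    Hypothetical layout: red in bits 20-29, green in bits 10-19, blue in bits 0-9;
    the top 2 bits are left unused.
    """
    for value in (r, g, b):
        if not 0 <= value <= 1023:
            raise ValueError(f"10-bit channel value out of range: {value}")
    return (r << 20) | (g << 10) | b


def unpack_rgb10(word):
    """Recover (r, g, b) by shifting each field down and masking off 10 bits (0x3FF)."""
    return (word >> 20) & 0x3FF, (word >> 10) & 0x3FF, word & 0x3FF


word = pack_rgb10(1023, 512, 7)
print(hex(word))           # 0x3ff80007
print(unpack_rgb10(word))  # (1023, 512, 7)
```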

Debunking Myths and Addressing Common Questions

"Can I see the difference between 8-bit and 16-bit on my screen?" Probably not on a standard 8-bit monitor. The difference is in the editing headroom, not necessarily the final displayed image if you export correctly. You'll see banding during editing on an 8-bit file that you won't on a 16-bit file.

"Does 16-bit mean my files are twice as big?" Not exactly. A 16-bit per channel image file is significantly larger—often 2-3 times the size of an 8-bit equivalent—because it stores more data per pixel. This impacts storage and workflow speed.

"Is 16-bit always better quality?" Only if you use it. If you shoot a JPEG (which is 8-bit) and then save it as a 16-bit TIFF, you haven't magically added information. You've just padded the existing 8-bit data. Garbage in, garbage out. 16-bit's power is only unlocked when starting from a high-bit-depth source (like a RAW file or a 10-bit video frame).

"What about bit depth in audio?" This is a related but separate concept! In audio, bit depth (e.g., 16-bit CD quality vs. 24-bit studio masters) determines the dynamic range and noise floor of the signal. The principles of "more bits = more possible values = less quantization noise" are similar, but the application is different.

The Future: Beyond 16-Bit and the Rise of HDR

As display technology races toward HDR (High Dynamic Range) and Wide Color Gamut (like Rec. 2020), the demands on bit depth intensify. HDR content spans a much wider range of brightness, from deep blacks to dazzling highlights. Encoding this range in a standard 8-bit signal invites severe banding, especially in the bright or dark extremes, and even 10-bit needs a carefully designed transfer curve to stay smooth. This is pushing the industry toward 12-bit and 16-bit (or floating-point) pipelines for mastering, and toward 10-bit and 12-bit delivery in next-gen formats like Dolby Vision and HDR10+.
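To get a feel for why HDR needs the extra bits, the sketch below uses the PQ transfer function (SMPTE ST 2084, the curve behind HDR10 and Dolby Vision) to estimate the brightness jump between neighbouring code values at a few luminance levels. It is a simplification: it assumes full-range codes (real video signals usually use a narrower "legal" range), the helper names are illustrative, and steps are reported as a percentage of the current brightness, where smaller means smoother.

```python
# SMPTE ST 2084 (PQ) constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits):
    """Absolute luminance in cd/m^2 (up to 10,000) -> PQ signal value in 0.0-1.0."""
    y = (nits / 10000) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


def pq_decode(signal):
    """PQ signal value in 0.0-1.0 -> absolute luminance in cd/m^2."""
    p = signal ** (1 / M2)
    return 10000 * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def relative_step(nits, bits):
    """Fractional brightness jump from the code nearest `nits` to the next code up.

    Simplification: full-range codes 0..2**bits - 1 are assumed.
    """
    max_code = 2 ** bits - 1
    code = round(pq_encode(nits) * max_code)
    low = pq_decode(code / max_code)
    high = pq_decode((code + 1) / max_code)
    return (high - low) / low


for nits in (1, 100, 1000):
    steps = ", ".join(f"{bits}-bit {relative_step(nits, bits):.2%}" for bits in (8, 10, 12))
    print(f"{nits:>5} nits: {steps}")
```

Each two bits removed makes the jumps roughly four times larger, which is why 8-bit HDR is so prone to banding.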

Furthermore, machine learning and AI upscaling tools (like those from Topaz Labs or Adobe) often perform better when fed higher bit-depth source data. The algorithm has more accurate information to work with, leading to cleaner, more believable results.

Conclusion: It's About the Workflow, Not Just the Number

The 8 bit vs 16 bit debate ultimately resolves to a single, powerful concept: workflow integrity. 8-bit is the efficient, universal language of final delivery and everyday use. It's the perfect endpoint. 16-bit (and its cousins 10-bit and 12-bit) is the expansive, forgiving, and information-rich language of creation and manipulation. It's the ideal starting point for professional work.

For the hobbyist snapping photos for Facebook, 8-bit is your friend. For the artist painstakingly grading a cinematic scene or retouching a high-end portrait, 16-bit is your essential foundation. The most critical mistake isn't choosing one over the other; it's using 8-bit during a heavy editing process that demands more, or unnecessarily bloating files with 16-bit data when 8-bit is the final destination. Understand your source material, your editing intent, and your final output, and let that guide your bit depth choice. In the grand scheme of digital visuals, both 8-bit and 16-bit are vital tools—just make sure you're using the right one for the job at hand.