PCI Express Error Counters: The Silent Killers Of System Performance And How To Tame Them

Have you ever experienced a mysterious system slowdown, an intermittent device disconnection, or a baffling blue screen of death with no obvious cause? While software bugs and driver conflicts are common suspects, the culprit might be hiding in the hardware layer you rarely see: your PCI Express (PCIe) error counters. These microscopic metrics, constantly tracked by your motherboard's chipset, are the early warning system for a failing connection between your CPU, GPU, SSD, and other expansion cards. Ignoring them is like ignoring the check engine light in your car—you might get lucky for a while, but a catastrophic failure is often just around the corner. This comprehensive guide will pull back the curtain on PCIe error counters, transforming you from a casual user into a proactive system diagnostician who can spot trouble before it spells disaster.

What Exactly Are PCI Express Error Counters?

At its core, the PCI Express bus is a high-speed serial communication standard that connects virtually every critical component in a modern computer, from the graphics card to the fastest NVMe SSDs. To ensure this communication remains reliable, the PCIe specification includes a robust error reporting mechanism known as Advanced Error Reporting (AER). AER is an optional extended capability in PCIe configuration space that lets a device record both correctable and uncorrectable errors and signal them toward the root complex, where the operating system can pick them up. What this guide calls PCI Express error counters are the AER status registers plus the running tallies that the OS or a monitoring tool builds from them. Think of them as a detailed logbook, kept partly in each device and partly at the root port (in your CPU or chipset), where each entry records a hiccup in the data stream: a single corrupted packet, a replayed transfer, or a complete communication breakdown.

These counters exist for a reason: the physical layer of a PCIe link is astonishingly complex. It operates at gigahertz frequencies, over traces on a printed circuit board, through connectors, and sometimes across cables. At these speeds, even minor electrical noise, signal attenuation, or impedance mismatch can cause bits to be misinterpreted. The error counters quantify these imperfections. They are not just for server administrators; they are vital for anyone running a high-performance desktop, a gaming rig with a powerful GPU, or a workstation with multiple fast SSDs. A steadily increasing count of a specific error type is a clear indicator of a degrading physical connection, a flaky component, or inadequate power delivery. These problems manifest as micro-stutters in games, dropped storage I/O, or unexplained system instability.

The Two Critical Families: Correctable vs. Uncorrectable Errors

Understanding the taxonomy of PCIe errors is the first step to diagnosis. The AER specification categorizes errors into two primary, fundamentally different buckets, and the counters track them separately.

Correctable Errors are minor, recoverable glitches. Up through PCIe 5.0 the protocol relies on a link-level CRC (LCRC) check and an ACK/NAK replay mechanism rather than forward error correction (FEC only arrives with PCIe 6.0). When a data packet (called a Transaction Layer Packet, or TLP) is corrupted in transit, often due to a single-bit flip from electrical noise, the receiving device detects the error using the CRC check and sends a NAK, the sender replays the packet from its replay buffer, and the process is invisible to applications. The event is recorded in the Correctable Error Status register, but the system continues functioning normally. Examples include:

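- Receiver Error: A physical-layer error detected by the receiver, such as a symbol or framing error.

- Bad TLP: A TLP that failed its link-level CRC check or arrived with an unexpected sequence number.

- Bad DLLP: A corrupted data link layer packet (used for flow control and ACK/NAK).

- REPLAY_NUM Rollover: The sender had to replay the same packets so many times that its replay counter rolled over, which forces the link to retrain.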

While "correctable" sounds harmless, a rapidly increasing count of these errors is a major red flag. It indicates the physical link is struggling to maintain clean signal integrity. It's like your car's engine misfiring occasionally but keeping going—it's a warning that a component (cable, slot, connector) is failing and will eventually cause an uncorrectable error.
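The detect-and-retransmit loop described above can be sketched as a toy model. This is only an illustration, not the real data link layer: `zlib.crc32` stands in for the link CRC, and `send_with_replay`, `corrupt_first_n`, and `max_replays` are hypothetical names invented for the example.

```python
import zlib


def send_with_replay(payload: bytes, corrupt_first_n: int, max_replays: int = 3) -> dict:
    """Toy model of one packet crossing a link with CRC checking and replay.

    corrupt_first_n: how many of the first transmissions arrive with a flipped bit
                     (a hypothetical knob for the simulation).
    max_replays:     how many retransmissions the sender attempts before giving up.
    """
    if not payload:
        raise ValueError("payload must not be empty")
    crc_sent = zlib.crc32(payload)  # stand-in for the link-level CRC
    bad_tlp_count = 0               # what a correctable-error tally would record

    for transmission in range(1 + max_replays):
        received = bytearray(payload)
        if transmission < corrupt_first_n:
            received[0] ^= 0x01     # single-bit flip caused by noise
        if zlib.crc32(bytes(received)) == crc_sent:
            # Receiver accepts the packet (ACK); higher layers never notice the retries.
            return {"delivered": True, "replays": transmission, "bad_tlp": bad_tlp_count}
        bad_tlp_count += 1          # receiver rejects the packet (NAK) and logs the error

    # Every attempt failed: this is where a real link would retrain or escalate.
    return {"delivered": False, "replays": max_replays, "bad_tlp": bad_tlp_count}
```

With `corrupt_first_n=2` the packet is still delivered, but only after two replays and two logged errors, which is the latency cost that a rising correctable count hints at.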

Uncorrectable Errors are severe failures that the hardware cannot repair on its own. AER subdivides them into non-fatal errors (a transaction is lost, but the link itself still works and software may be able to recover) and fatal errors (the link is no longer reliable and must be reset, or the device disabled, to prevent data corruption or system hangs). Depending on severity and on how the driver handles the notification, the result can be a recovered device, a system crash (BSOD on Windows, kernel panic on Linux), or the device being dropped from the system. The Uncorrectable Error Status register records events like:

- Unsupported Request: A device receives a command it doesn't understand.

- ACS Violation: A security violation in the Access Control Services.

- Unexpected Completion: A completion packet arrives for a non-existent request.

- Completer Abort: The target device aborts a request.

- Completion Timeout: A request did not receive its completion within the allocated time.

- Poisoned TLP: A packet with an error indicator that must not be used by the system.

An uncorrectable error is the system's last-ditch effort to prevent catastrophe. A single uncorrectable error event is a critical failure requiring immediate investigation. The counter helps determine if it was a one-time cosmic ray bit-flip (rare) or the symptom of a progressively worsening hardware problem (common).

Why Monitoring PCIe Error Counters Is Non-Negotiable for System Health

For the average user, these counters might as well be invisible. Operating systems like Windows and Linux do not typically expose them in a user-friendly dashboard. However, for IT professionals, system builders, and enthusiasts, monitoring these counters is a cornerstone of proactive hardware diagnostics. In large data centers, hardware failures and performance anomalies are regularly traced back to PCIe link layer issues, often originating from a single faulty cable or a marginally performing SSD. A 2021 study by a major cloud provider reportedly found that over 15% of storage device replacements in their fleet were preceded by a measurable increase in PCIe correctable error rates, even when the drive's own SMART data appeared healthy.

Ignoring these counters means flying blind. You might spend weeks chasing driver updates, reinstalling operating systems, or blaming software, all while a physical connection slowly degrades. The impact is real:

- Performance Degradation: Correctable errors force packet retransmissions. While the protocol recovers, it adds latency and reduces effective bandwidth. In a high-performance NVMe SSD array or a GPU-intensive compute task, this can manifest as lower-than-expected throughput and inconsistent performance.

- Intermittent Stability Issues: The classic "it works fine until it doesn't" problem. A system that crashes under load (like during a game or render) but is stable at idle is a prime candidate for a PCIe link failing under the increased electrical demands of full-speed operation.

- Silent Data Corruption (Theoretical): While AER is designed to prevent this, a cascade of errors or a buggy device implementation could potentially allow corrupted data to pass if error handling is overwhelmed. This is rare but a worst-case scenario.

- Premature Hardware Replacement: Without checking the root cause, you might replace a perfectly good GPU or SSD, only to have the new device fail in the same slot due to a faulty motherboard trace or power supply issue.

By regularly checking error counters, you shift from reactive troubleshooting ("Why did it crash?") to predictive maintenance ("The correctable error rate on my GPU slot has increased 300% in a month; I should reseat the card or check the power connector before it fails completely").
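The "increased 300%" comparison is simple arithmetic on two observed rates. A minimal sketch, assuming you have already collected error counts over two periods yourself; the function names are hypothetical:

```python
def errors_per_day(error_count: int, days: float) -> float:
    """Average correctable errors per day over an observation period."""
    if days <= 0:
        raise ValueError("days must be positive")
    return error_count / days


def percent_increase(baseline_rate: float, current_rate: float) -> float:
    """Relative change from baseline_rate to current_rate, in percent."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive to compute a relative change")
    return (current_rate - baseline_rate) / baseline_rate * 100.0


# Example: 150 errors over last month's 30 days vs. 600 errors over this month's 30 days.
last_month = errors_per_day(150, 30)   # 5.0 per day
this_month = errors_per_day(600, 30)   # 20.0 per day
change = percent_increase(last_month, this_month)  # 300.0
```

Note that a 300% increase means the rate is four times the baseline, not three times.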

The Essential Toolkit: How to Access and Read PCIe Error Counters

Accessing these hidden counters requires command-line tools or specialized software. The methods differ between operating systems, but the principles are the same.

On Linux: The lspci and setpci Commands

Linux provides the most direct and powerful access. The primary tool is lspci, which lists all PCIe devices. To see the full AER capability and error counters for a specific device, you need its address (bus:device.function). For example:

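lspci -s 0000:01:00.0 -vvv

The -vvv flag shows very verbose output. Run the command as root (for example with sudo), because unprivileged users generally cannot read the extended capabilities. Scroll down to find a section titled "Capabilities: [100 v1] Advanced Error Reporting" (the offset and version can differ between devices). Within this block, you'll see registers like:

- UESta (Uncorrectable Error Status)

- CESta (Correctable Error Status)

- HeaderLog (for uncorrectable errors, logs the header of the offending packet)

These are status registers, not simple counters. They are sticky bits; once an error occurs, the bit is set until explicitly cleared by the OS or a driver. To see if errors are accumulating, you need to check these bits, clear them (by writing 1 to the bit position), and then monitor over time. If the kernel's AER driver is active, it typically handles the error, logs it to the kernel log (visible with dmesg), and clears the bit itself, so the log is often the better record. Recent Linux kernels also keep running per-device totals in sysfs files such as aer_dev_correctable, aer_dev_nonfatal, and aer_dev_fatal under /sys/bus/pci/devices/<address>/. Scripts that periodically read and log these values can automate the monitoring. (The aer-inject tool, by contrast, is for injecting artificial errors to test error handling, not for monitoring.) The setpci command can be used to clear specific bits programmatically.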
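Because the status bits appear in lspci output as flag names followed by `+` (set) or `-` (clear), a short script can pull out just the asserted ones. The sketch below assumes the usual `UESta:` and `CESta:` line format printed by `lspci -vvv`; the sample text is illustrative rather than captured from a real machine, and `parse_aer_status` is a hypothetical helper name.

```python
import re

# Illustrative excerpt in the format lspci -vvv prints for the AER capability.
SAMPLE = """\
    Capabilities: [100 v1] Advanced Error Reporting
        UESta:  DLP- SDES- TLP- FCP- CmpltTO- CmpltAbrt- UnxCmplt- RxOF- MalfTLP- ECRC- UnsupReq- ACSViol-
        UEMsk:  DLP- SDES- TLP- FCP- CmpltTO- CmpltAbrt- UnxCmplt- RxOF- MalfTLP- ECRC- UnsupReq- ACSViol-
        CESta:  RxErr+ BadTLP- BadDLLP- Rollover- Timeout- AdvNonFatalErr+
"""


def parse_aer_status(lspci_text: str) -> dict:
    """Return the flags marked '+' on the UESta and CESta lines.

    Mask (UEMsk/CEMsk) and severity lines are ignored on purpose.
    """
    asserted = {}
    for line in lspci_text.splitlines():
        match = re.match(r"\s*(UESta|CESta):\s*(.*)", line)
        if match:
            register, flags = match.group(1), match.group(2).split()
            asserted[register] = [flag[:-1] for flag in flags if flag.endswith("+")]
    return asserted


status = parse_aer_status(SAMPLE)
# On a real system the text could come from something like:
#   subprocess.run(["lspci", "-s", "0000:01:00.0", "-vvv"], capture_output=True, text=True).stdout
```

Logging the result with a timestamp at regular intervals gives the "monitor over time" view that the raw sticky bits do not provide by themselves.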

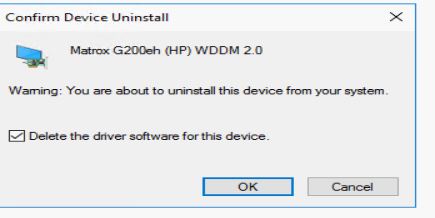

On Windows: The pciewh Tool and Event Viewer

Windows does not have a built-in command-line tool as comprehensive as lspci. The Windows Driver Kit (WDK) is said to include a command-line utility called pciewh that can query AER information; confirm that it is present in your WDK version before relying on it. For most users, the primary window into PCIe errors is the Windows Event Viewer, because Windows surfaces AER reports through the Windows Hardware Error Architecture (WHEA).

Navigate to Windows Logs -> System. Look for warning and error events from the WHEA-Logger source. Event ID 17 typically marks a corrected hardware error (with details such as "Component: PCI Express Root Port"), while ID 18 marks a fatal hardware error. The event details usually include the device's bus:device.function address and its vendor and device IDs, along with the error source (for example, Advanced Error Reporting). While not a live counter, a pattern of these events is a definitive sign of trouble. Third-party utilities like HWiNFO64 (in its sensor-only mode) can also display a running count of WHEA-reported errors on supported systems.

Vendor-Specific and Enterprise Tools

- GPU Vendors: NVIDIA's nvidia-smi and AMD's rocm-smi focus mainly on the GPU itself rather than the PCIe link, although nvidia-smi -q does report a PCIe replay counter on supported GPUs.

- Storage Vendors: Tools from Samsung (Magician), Crucial (Storage Executive), or Intel (SSD Toolbox) often include diagnostics that may indirectly flag PCIe link issues if they cause drive timeouts.

- Server/Enterprise: In data centers, IPMI (Intelligent Platform Management Interface) and Redfish APIs often expose AER logs. BMCs (Baseboard Management Controllers) like those from Dell iDRAC, HPE iLO, or Supermicro can collect and report these errors out-of-band, even if the main OS is crashed. This is critical for remote management.

Decoding the Error Codes: A Practical Field Guide

Once you have access to the error status, what do you see? The AER specification defines specific bit positions for each error type. Here’s a practical interpretation guide for the most common culprits you'll encounter in the wild.

The Most Common Correctable Error: Receiver Error (Bit 0)

This is the workhorse of correctable errors. It signifies that the physical layer receiver detected a violation of the PCIe electrical or protocol specifications. A high, steady rate of Receiver Errors almost always points to a physical layer problem:

- A poorly seated card: Reseating the GPU, SSD, or other card can often dramatically reduce or eliminate these errors by improving the physical contact in the slot.

- Dust and debris: Oxidation or grime on the gold fingers of a card or inside the PCIe slot can increase electrical resistance and cause signal degradation. Compressed air cleaning is a simple fix.

- Inadequate cooling: A component (especially a GPU or high-end SSD) running excessively hot can cause slight expansion/contraction of materials, momentarily affecting signal integrity. Improving case airflow can help.

- A marginal cable or riser: For GPUs using PCIe riser cables (common in mining rigs or SFF builds), the cable is the most common point of failure. A kinked, low-quality, or poorly shielded cable will generate a storm of correctable errors. Swapping the cable is the first test.

The Uncorrectable Alarm: Completer Abort & Unsupported Request (Bits 15 & 20)

An Uncorrectable Completer Abort means the target device (e.g., your SSD) received a request it could not complete and aborted the transaction. This could be due to a firmware bug in the device, but more frequently, it's a symptom of the device not getting enough resources (power, or even PCIe link training failing due to instability). Unsupported Request is similar—the device got a command it doesn't recognize, which can happen if there's a serious protocol violation earlier in the chain, often from a flaky link. A single occurrence might be a driver issue. Multiple occurrences in a short time frame mean the device or the link to it is fundamentally unhealthy.

The Silent Degrader: AtomicOp Egress Blocked and ECRC Errors

- AtomicOp Egress Blocked (Uncorrectable, Bit 24): This relates to atomic operations (read-modify-write). It is raised when a port that is configured to block AtomicOp requests receives one that would be routed out through it. Errors here are uncommon and usually point to a configuration or platform-support mismatch (a device issuing atomics that some port on the path does not pass) rather than to a marginal signal.

- ECRC Error (Uncorrectable, Bit 19): End-to-End CRC is an optional checksum that travels with the TLP from the original sender to the final receiver. Because the link-level CRC is checked and regenerated at every hop, an ECRC Error means the packet was corrupted somewhere the link CRC could not see it, typically inside an intermediate switch or other component along the path rather than on one wire segment.
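For reference, the bit positions in the two AER status registers can be decoded with a small lookup table. The sketch below uses the bit assignments from the AER capability layout (the same values appear as the PCI_ERR_COR_* and PCI_ERR_UNC_* constants in Linux's pci_regs.h); the function names are hypothetical, and the raw register values are assumed to have been read already, for example from offsets 0x04 (uncorrectable) and 0x10 (correctable) of the AER capability.

```python
CORRECTABLE_BITS = {
    0: "Receiver Error",
    6: "Bad TLP",
    7: "Bad DLLP",
    8: "REPLAY_NUM Rollover",
    12: "Replay Timer Timeout",
    13: "Advisory Non-Fatal Error",
    14: "Corrected Internal Error",
    15: "Header Log Overflow",
}

UNCORRECTABLE_BITS = {
    4: "Data Link Protocol Error",
    5: "Surprise Down",
    12: "Poisoned TLP",
    13: "Flow Control Protocol Error",
    14: "Completion Timeout",
    15: "Completer Abort",
    16: "Unexpected Completion",
    17: "Receiver Overflow",
    18: "Malformed TLP",
    19: "ECRC Error",
    20: "Unsupported Request",
    21: "ACS Violation",
    22: "Uncorrectable Internal Error",
    23: "MC Blocked TLP",
    24: "AtomicOp Egress Blocked",
    25: "TLP Prefix Blocked",
}


def decode_status(value: int, table: dict) -> list:
    """List the names of all set bits in a 32-bit status value, lowest bit first."""
    names = []
    for bit in range(32):
        if value & (1 << bit):
            names.append(table.get(bit, f"Reserved/unknown bit {bit}"))
    return names


def decode_correctable(value: int) -> list:
    return decode_status(value, CORRECTABLE_BITS)


def decode_uncorrectable(value: int) -> list:
    return decode_status(value, UNCORRECTABLE_BITS)
```

For example, a correctable status of 0x00002001 decodes to Receiver Error plus Advisory Non-Fatal Error, and an uncorrectable status of 0x00108000 decodes to Completer Abort plus Unsupported Request.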

A Practical Troubleshooting Workflow: From Logs to Hardware

Armed with knowledge and tools, here is a systematic, least-invasive-first approach to diagnosing a system showing PCIe error symptoms or with known error counter increments.

Step 1: Document and Baseline.

Before touching anything, capture the current state. On Linux, run lspci -vvv and save the output for all relevant devices (GPU, NVMe drives). Note the AER status bits. On Windows, clear the Event Viewer log and reproduce the problem (run a stress test like FurMark for GPU, CrystalDiskMark for SSDs). Then check for new PCIe events. Establish a baseline: are errors happening at idle, or only under load?
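On Linux, one way to capture a baseline is to snapshot the kernel's per-device AER totals before and after a stress run and compare them. The sketch below assumes a kernel that exposes `/sys/bus/pci/devices/<address>/aer_dev_correctable` as lines of the form `name count`; the helper names are hypothetical, and the parsing works on text so it can be tried without hardware access.

```python
def parse_aer_counters(text: str) -> dict:
    """Parse 'name count' lines (as found in aer_dev_correctable) into a dict."""
    counters = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[1].isdigit():
            counters[parts[0]] = int(parts[1])
    return counters


def counter_deltas(before: dict, after: dict) -> dict:
    """Return only the counters that changed between two snapshots."""
    deltas = {}
    for name, value in after.items():
        change = value - before.get(name, 0)
        if change != 0:
            deltas[name] = change
    return deltas


# Illustrative snapshots; on a real system, read the sysfs file before and after the test, e.g.
#   open("/sys/bus/pci/devices/0000:01:00.0/aer_dev_correctable").read()
idle_snapshot = parse_aer_counters("RxErr 3\nBadTLP 0\nBadDLLP 0\nTOTAL_ERR_COR 3\n")
load_snapshot = parse_aer_counters("RxErr 10\nBadTLP 1\nBadDLLP 0\nTOTAL_ERR_COR 11\n")
under_load = counter_deltas(idle_snapshot, load_snapshot)
```

An empty result after an idle period and a non-empty one after a stress test answers the idle-versus-load question directly.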

Step 2: The Software Sweep.

- Update Drivers: Ensure you have the latest stable chipset drivers from your motherboard vendor and the latest firmware/drivers for your PCIe devices (GPU, SSD). This eliminates known software bugs.

- Check Power Settings: In the OS power plan, ensure PCIe link state power management (like ASPM) is either set to "Off" for testing or to the manufacturer's recommended setting. Aggressive power saving can sometimes cause link instability on marginal hardware.

- Disable Overclocks: Temporarily revert any GPU, CPU, or memory overclocks. An unstable overclock can manifest as PCIe protocol errors.

Step 3: The Physical Inspection and Reseat.

- Power down, unplug, and open the case.

- Reseat all PCIe cards. Remove and firmly reinsert the GPU, SSDs, and any add-in cards. This is the single most effective fix for a large percentage of error cases.

- Inspect and clean. Use compressed air to blow out dust from the PCIe slots and from the card's edge connectors. Look for any visible damage, bent pins (on the slot, not the card!), or discoloration.

- Check power cables. For GPUs and some high-end SSDs, ensure all required PCIe power cables from the PSU are securely plugged in. A loose or insufficient power connection can cause the device to malfunction at full speed.

Step 4: Isolate the Faulty Link.

If errors persist, you need to find which link is bad. The AER error message or the lspci output often identifies the port where the error was detected (e.g., "PCI Express Root Port" or "Upstream Port"). This points to the link between that port and the device connected to it.

- Swap slots: If possible, move the suspect card (e.g., the GPU) to a different PCIe x16 slot (usually controlled by a different root port). If the errors follow the card, the card is likely faulty. If the errors stay with the slot, the motherboard's trace or the slot itself is suspect.

- Swap devices: Move a known-good card (like a secondary GPU or a different NVMe drive) into the suspect slot. If the new device starts logging errors, the slot or its associated power/clock is bad.

- Test with minimal hardware. Boot with only the essential components: motherboard, CPU, one stick of RAM, and the integrated graphics (if available) or a single, known-good low-power GPU. Then add devices back one by one, monitoring error counters after each addition.
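The swap tests above amount to a small decision table. A minimal sketch of that reasoning, with a hypothetical function name and two inputs you record by hand after each test:

```python
def suspect_from_swap_tests(errors_follow_card: bool, known_good_fails_in_slot: bool) -> str:
    """Combine the slot-swap and device-swap observations into a likely suspect.

    errors_follow_card:       the suspect card still logs errors after moving to another slot.
    known_good_fails_in_slot: a known-good card logs errors when placed in the original slot.
    """
    if errors_follow_card and not known_good_fails_in_slot:
        return "card"
    if known_good_fails_in_slot and not errors_follow_card:
        return "slot or motherboard"
    if errors_follow_card and known_good_fails_in_slot:
        return "inconclusive: both implicated, retest with minimal hardware"
    return "inconclusive: look at PSU, riser, and temperatures"
```

The two inconclusive outcomes are the cue to move on to the minimal-hardware test and the later power and thermal checks.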

Step 5: The Cable and Riser Check (For GPU).

If you are using a PCIe riser cable (especially common in small form factor builds or mining setups), this is your prime suspect. Riser cables are notorious for causing signal integrity issues, particularly at PCIe 4.0 and 5.0 speeds. Test by removing the riser and connecting the GPU directly to the motherboard. If the errors vanish, the riser cable is defective or of insufficient quality for your link speed. Replace it with a high-quality, well-shielded cable rated for your PCIe generation.

Step 6: Power Supply and Environmental Factors.

- PSU Health: A failing or underpowered PSU can cause voltage droop on the 12V rail (which powers PCIe slots via the motherboard), leading to instability. Test with a known-good, higher-wattage PSU if available.

- Temperature: Use monitoring software (HWiNFO, GPU-Z) to check component temperatures under load. A GPU or SSD running at 90°C+ may be thermally throttling or experiencing expansion-related link issues. Improve case airflow.

- Motherboard BIOS: Update to the latest BIOS. BIOS updates often contain PCIe link training and stability fixes.

Proactive Measures: Building a Resilient PCIe Ecosystem

Prevention is always better than cure. When building or upgrading a system, consider these best practices to minimize future PCIe error risks.

- Match Speeds Appropriately: A PCIe 4.0 SSD in a PCIe 3.0 slot will work, but the link will train at the lower speed. This is fine. The problem arises when a link fails to train at its intended speed and falls back repeatedly, logging errors. Ensure your motherboard and CPU support the generation of your devices.

- Prioritize Slot Wiring: On most consumer motherboards, the primary PCIe x16 slot (the top one) is directly connected to the CPU with the most robust traces. Secondary slots often share bandwidth or connect through the chipset, which can be more susceptible to noise. Install your primary GPU in the top slot.

- Invest in Quality Cables and Risers: Never cheap out on a PCIe riser cable for a high-performance build. Look for cables with ferrite cores, heavy shielding, and positive reviews regarding stability at your target PCIe generation. For direct connections, ensure the card is fully inserted and the slot's retention clip is engaged.

- Manage Heat Aggressively: High-end GPUs and SSDs generate significant heat. Ensure your case has good airflow (intake and exhaust) and that heatsinks on these components are clean. Thermal throttling can sometimes masquerade as PCIe link issues.

- Power is Paramount: Use a high-quality, sufficiently powerful PSU from a reputable brand. The 12V rail(s) must be stable. For multi-GPU or multiple high-end SSD systems, calculate power draw with headroom to spare.

- For Enterprise/Data Center: Implement automated logging of AER events via IPMI/Redfish. Set thresholds for correctable error rates (e.g., more than 100 in an hour on a given port) to generate automated tickets for investigation before an uncorrectable failure occurs.
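A threshold such as "more than 100 correctable errors in an hour on a given port" can be implemented as a sliding-window counter. This is a minimal sketch with a hypothetical class name; how the error events reach it (IPMI, Redfish, or a log scraper) is left out, and timestamps are plain seconds.

```python
from collections import deque


class CorrectableErrorAlarm:
    """Sliding-window alarm for one port's correctable error events."""

    def __init__(self, threshold: int = 100, window_seconds: int = 3600):
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._events = deque()  # (timestamp, count) pairs, oldest first

    def record(self, timestamp: float, count: int = 1) -> bool:
        """Record `count` errors at `timestamp`; return True if the window total exceeds the threshold."""
        self._events.append((timestamp, count))
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while self._events and self._events[0][0] <= cutoff:
            self._events.popleft()
        return sum(c for _, c in self._events) > self.threshold


alarm = CorrectableErrorAlarm()
first = alarm.record(0, 60)       # 60 errors in the window
second = alarm.record(1800, 41)   # 101 errors in the window
third = alarm.record(3600, 1)     # the batch from t=0 has aged out, 42 remain
```

Keeping one alarm instance per port lets a ticketing hook fire only for the link that is actually degrading.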

The Horizon: PCIe 5.0, 6.0, and the Future of Error Management

As we march toward PCIe 5.0 (32 GT/s) and the upcoming PCIe 6.0 (64 GT/s with PAM4 signaling), signal integrity challenges become exponentially harder. The margin for error shrinks dramatically. At these speeds, even the slightest impedance mismatch, a microscopic solder joint void, or a marginally compliant cable can cause a flood of errors. This makes PCIe error counters not just useful, but absolutely critical for system validation and ongoing health monitoring.
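To put a number on the shrinking margin, consider the unit interval, the time window in which the receiver must sample each symbol. The sketch below uses the per-lane transfer rates quoted above plus the well-known 8 and 16 GT/s rates of PCIe 3.0 and 4.0; the table and function names are hypothetical.

```python
# Per-lane transfer rate in GT/s and bits carried per symbol
# (NRZ signaling carries 1 bit per symbol, PAM4 carries 2).
PCIE_SIGNALING = {
    "3.0": (8, 1),
    "4.0": (16, 1),
    "5.0": (32, 1),
    "6.0": (64, 2),
}


def unit_interval_ps(rate_gt_per_s: float, bits_per_symbol: int) -> float:
    """Duration of one symbol on the wire, in picoseconds."""
    symbols_per_ns = rate_gt_per_s / bits_per_symbol  # giga-symbols per second
    return 1000.0 / symbols_per_ns


intervals = {gen: unit_interval_ps(rate, bits) for gen, (rate, bits) in PCIE_SIGNALING.items()}
# 3.0 -> 125.0 ps, 4.0 -> 62.5 ps, 5.0 -> 31.25 ps, 6.0 -> 31.25 ps
```

PCIe 6.0 keeps the same 31.25 ps symbol time as 5.0 but packs four voltage levels into it, so the timing window stays the same while the voltage margin between levels shrinks to roughly a third.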

Future system management will likely see deeper integration of AER data. We can anticipate:

- OS-Level Dashboards: Operating systems may incorporate a user-friendly "PCIe Health" tab in system information panels.

- AI-Driven Anomaly Detection: Machine learning models running on the BMC or in the OS could learn the normal "error fingerprint" of a healthy system and flag subtle, trending increases in specific error types long before they cause a crash.

- Predictive Failure Analytics: In server fleets, correlating AER data with other telemetry (temperature, power, workload) will allow for predictive replacement of components like riser cables or even entire server nodes before they impact workload SLAs.

Conclusion: Your System's Canary in the Coal Mine

PCI Express error counters are the unsung sentinels of your computer's hardware health. They operate silently in the background, recording every microscopic stumble in the high-speed dance of data between your components. While a single correctable error is harmless, a persistent pattern is a clarion call—a clear sign that a physical connection is deteriorating. An uncorrectable error is a system-level emergency, a final warning that something has gone critically wrong.

The power to interpret these counters transforms you from a passive user into an active system custodian. By incorporating simple checks—using lspci on Linux, scanning the Windows Event Viewer, and performing methodical hardware isolation—you can diagnose the root cause of frustrating instability, avoid costly and unnecessary part replacements, and extend the reliable life of your system. In our era of ever-increasing speeds with PCIe 4.0, 5.0, and beyond, paying attention to these foundational error metrics isn't just advanced troubleshooting; it's an essential practice for anyone who values a stable, high-performance, and truly reliable computing experience. Start checking your counters today—your future self, and your system, will thank you for it.